In our recent posts on Localising Audiovisual Content, we looked at the different types of subtitling, voice-over and dubbing, and at why and when each is used. Today we’d like to share some best practices for setting up a subtitling project and discuss the workflow once the project is under way.

Before the project starts: best practices

We know that the audiovisual industry is fast-paced and constantly changing, so we are as flexible as possible in order to accommodate client requirements – but we are also honest and transparent when something can have a negative impact on quality.

In an ideal world:

- The video to be subtitled is finished: the subtitler works with the final version of the video, with the definitive audio track, on-screen titles, edit and frame rate (number of images per second). This ensures the subtitler can take all the necessary variables into account, including the final shot changes, so that the content and timing of the subtitles are as accurate as possible. Working with an unfinished product can have serious consequences: for example, the time-coding of the subtitles becomes useless if the video is later converted to a different frame rate!

- Not only is the video finished, but the subtitler has access to it: confidentiality is obviously a consideration, but if the subtitler can’t see what is happening on screen because the image is heavily watermarked, quality will suffer.

- There is time to do the job: subtitling one minute of audio usually takes between 10 and 20 minutes, though it can take more or less time depending on the terminology, the amount of speech, and so on. In other words, a 110-minute film represents roughly 18 to 37 hours of work, so subtitling it takes at least 3 working days.

- We know where and when the video will be published and which audience it targets: this helps us meet the audience’s expectations, for example regarding the type of language, whether subtitles for the deaf and hard of hearing are required, what reading speed viewers can cope with, and so on.

- Subtitlers have access to a script or transcript and to any previously translated material: a script can make turnaround faster, and previous translations help with consistency.

- The delivery format is specified: subtitles can be delivered separately from the video, in a text file format such as SRT, or they can be burnt into the video file. The former is the most common delivery format nowadays, for DVDs, online video players and broadcast captioning. The latter gives you more control over formatting, but the video must be of good quality so that the subtitles are legible.

- There are source files available: for projects that require the modification of embedded graphics, it’s vital that there are editable source files available to make the process less costly – otherwise graphics may need to be recreated from scratch.

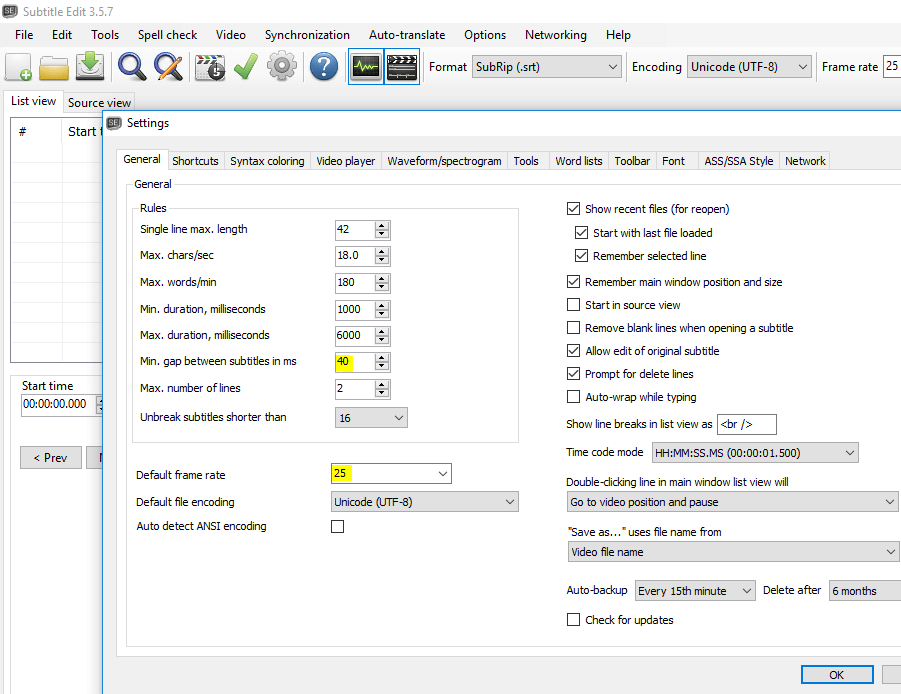

- There are guidelines available: these typically cover several points we have discussed in previous posts, such as reading speed, character limits, timing relative to frames and shot changes, line breaks, etc. If a client doesn’t provide guidelines, we give our freelancers a set based on the information we have and on current mainstream standards. For a glimpse of what a set of guidelines can look like, check out the BBC’s subtitling guidelines.
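The rule of thumb in the “There is time to do the job” point can be turned into a quick back-of-the-envelope estimate. This is a minimal sketch: the 8-hour working day is an assumption for illustration, and `estimate_effort` is a hypothetical helper.

```python
import math

# Rule of thumb from the text: 10-20 minutes of work per minute of audio.
MIN_RATIO = 10
MAX_RATIO = 20
# Assumption for illustration only: 8 working hours per day.
HOURS_PER_DAY = 8


def estimate_effort(runtime_minutes: float) -> tuple[float, float, int, int]:
    """Return (min_hours, max_hours, min_days, max_days) for a given runtime."""
    min_hours = runtime_minutes * MIN_RATIO / 60
    max_hours = runtime_minutes * MAX_RATIO / 60
    # Round up: a partly used day still ties up the subtitler.
    min_days = math.ceil(min_hours / HOURS_PER_DAY)
    max_days = math.ceil(max_hours / HOURS_PER_DAY)
    return min_hours, max_hours, min_days, max_days


if __name__ == "__main__":
    lo_h, hi_h, lo_d, hi_d = estimate_effort(110)
    print(f"{lo_h:.1f}-{hi_h:.1f} hours, {lo_d}-{hi_d} working days")
```

For a 110-minute film this prints `18.3-36.7 hours, 3-5 working days`, which matches the “at least 3 working days” above.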
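The frame-rate warning in the first point is easiest to see with frame-based timecodes (HH:MM:SS:FF, where FF counts frames within each second). In this sketch, which assumes non-drop-frame timecode and uses hypothetical helper names, a subtitle is spotted at 00:10:00:00 on a 25 fps master. If the video is then converted to 24 fps by slowing it down while keeping every frame, the frame the subtitle was spotted on is shown 25 seconds later, so the original timings no longer match the picture.

```python
def timecode_to_frames(tc: str, fps: int) -> int:
    """Convert a non-drop-frame HH:MM:SS:FF timecode to an absolute frame number."""
    hours, minutes, seconds, frames = (int(part) for part in tc.split(":"))
    if frames >= fps:
        # e.g. frame field 24 exists at 25 fps but not at 24 fps
        raise ValueError(f"frame field {frames} is invalid at {fps} fps")
    return ((hours * 60 + minutes) * 60 + seconds) * fps + frames


def frame_to_seconds(frame: int, fps: float) -> float:
    """Time (in seconds) at which a given frame is displayed at a given frame rate."""
    return frame / fps


if __name__ == "__main__":
    # Subtitle spotted on the 25 fps master.
    cue_frame = timecode_to_frames("00:10:00:00", fps=25)  # frame 15000
    print(frame_to_seconds(cue_frame, 25))  # 600.0
    # Same frames played back at 24 fps (slowed down, no frames dropped).
    print(frame_to_seconds(cue_frame, 24))  # 625.0
```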
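Several of the guideline points above, such as reading speed, characters per line and number of lines, are easy to check automatically. The sketch below is illustrative only: the limits (17 characters per second, 42 characters per line, 2 lines) are assumed example values, not figures from any particular guideline, and the `Subtitle` class and `check_subtitle` function are hypothetical.

```python
from dataclasses import dataclass

# Assumed example limits; real projects take these from the guidelines.
MAX_CPS = 17.0        # reading speed, characters per second
MAX_LINE_LENGTH = 42  # characters per line
MAX_LINES = 2         # lines per subtitle


@dataclass
class Subtitle:
    start: float  # seconds
    end: float    # seconds
    text: str     # lines separated by "\n"


def check_subtitle(sub: Subtitle) -> list[str]:
    """Return the guideline problems found in one subtitle (empty list if none)."""
    duration = sub.end - sub.start
    if duration <= 0:
        return ["end time must be after start time"]
    problems = []
    lines = sub.text.split("\n")
    if len(lines) > MAX_LINES:
        problems.append(f"{len(lines)} lines (max {MAX_LINES})")
    for line in lines:
        if len(line) > MAX_LINE_LENGTH:
            problems.append(f"line of {len(line)} characters (max {MAX_LINE_LENGTH})")
    # Line breaks are not counted; whether spaces count varies between guidelines.
    cps = len(sub.text.replace("\n", "")) / duration
    if cps > MAX_CPS:
        problems.append(f"reading speed {cps:.1f} cps (max {MAX_CPS})")
    return problems


if __name__ == "__main__":
    sub = Subtitle(1.0, 2.5, "This subtitle is far too long to read\nin just one and a half seconds.")
    print(check_subtitle(sub))
```

Here both lines fit within the line-length limit, so only the reading speed is flagged: 68 characters shown for 1.5 seconds is far above 17 characters per second.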

During the project: workflows

The workflow of a subtitling project can vary depending on how many languages the video is being subtitled into.

For interlingual subtitles into a single language, the video file and any available reference materials (transcript, script, previous translations) are sent to a subtitler, who produces translated, time-coded subtitles, taking line breaks and reading speed into account. The subtitler returns the subtitles as an SRT file, which is then sent, together with the video file and the reference materials, to a QA checker who ensures the quality of the subtitles.

For interlingual subtitles into several languages, however, it can be cheaper and faster to prepare a “template”: a file with time-codes that all the subtitlers for the different languages can use. In that case, the first step is to send the video file to a professional spotter, who creates the time-codes, either with a source-language transcript or with the subtitle content left empty. The spotter delivers a time-coded SRT file, which is sent to the subtitlers for each language. They then fill in the subtitles with translations, taking line breaks and reading speed into account. Finally, a QA checker for each language reviews the file.
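The template workflow can be pictured as follows: the timings in the spotter’s SRT file stay untouched, and only the text of each cue changes from language to language. This is a minimal sketch, assuming a well-formed SRT template and exactly one translated text per cue, supplied in order; `parse_template` and `fill_template` are hypothetical names, and in practice subtitlers do this inside subtitling software rather than with a script.

```python
import re

# SRT timing line, e.g. "00:00:01,000 --> 00:00:03,500".
TIMING = re.compile(r"^\d{2}:\d{2}:\d{2},\d{3} --> \d{2}:\d{2}:\d{2},\d{3}$")


def parse_template(srt: str) -> list[tuple[str, str]]:
    """Return (timing_line, text) pairs; the text may be empty in a template."""
    srt = srt.replace("\r\n", "\n")
    cues = []
    # Cues are separated by one or more blank lines.
    for block in re.split(r"\n\s*\n", srt.strip()):
        lines = block.strip().split("\n")
        if len(lines) < 2 or not TIMING.match(lines[1].strip()):
            raise ValueError(f"malformed cue: {block!r}")
        cues.append((lines[1].strip(), "\n".join(lines[2:])))
    return cues


def fill_template(template: str, translations: list[str]) -> str:
    """Build a new SRT that keeps the template's timings and uses the translated texts."""
    cues = parse_template(template)
    if len(cues) != len(translations):
        raise ValueError(f"{len(cues)} cues but {len(translations)} translations")
    blocks = [
        f"{number}\n{timing}\n{text}"
        for number, ((timing, _source), text) in enumerate(zip(cues, translations), start=1)
    ]
    return "\n\n".join(blocks) + "\n"


if __name__ == "__main__":
    template = (
        "1\n00:00:01,000 --> 00:00:03,500\nGood morning, everyone.\n\n"
        "2\n00:00:04,000 --> 00:00:06,000\nLet's get started.\n"
    )
    print(fill_template(template, ["Buenos días a todos.", "Empecemos."]))
```

Because every language reuses the same timing lines, the spotting is done once, which is where the cost and time savings of the template approach come from.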

For both workflows, at the end of the project our project manager checks that the subtitles display correctly and, if everything looks good, delivers them to the client.
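Part of that final check can be automated before anyone watches the video. This minimal sketch assumes SRT input and only catches structural problems (numbering gaps, cues that end before they start, cues that overlap the previous one); `check_srt` is a hypothetical helper, and it cannot replace watching the subtitles play against the video.

```python
import re

# Cue number line followed by its timing line.
CUE = re.compile(
    r"^(\d+)[ \t]*\r?\n"
    r"(\d{2}):(\d{2}):(\d{2}),(\d{3}) --> (\d{2}):(\d{2}):(\d{2}),(\d{3})",
    re.MULTILINE,
)


def to_ms(h: str, m: str, s: str, ms: str) -> int:
    """Convert SRT timestamp fields to milliseconds."""
    return ((int(h) * 60 + int(m)) * 60 + int(s)) * 1000 + int(ms)


def check_srt(srt: str) -> list[str]:
    """Report numbering gaps, inverted timings and overlapping cues."""
    problems = []
    previous_end = None
    for expected, match in enumerate(CUE.finditer(srt), start=1):
        number = int(match.group(1))
        start = to_ms(*match.group(2, 3, 4, 5))
        end = to_ms(*match.group(6, 7, 8, 9))
        if number != expected:
            problems.append(f"cue {number}: expected number {expected}")
        if end <= start:
            problems.append(f"cue {number}: ends before it starts")
        if previous_end is not None and start < previous_end:
            problems.append(f"cue {number}: overlaps the previous cue")
        previous_end = end
    return problems


if __name__ == "__main__":
    srt = "1\n00:00:01,000 --> 00:00:03,000\nHi\n\n3\n00:00:02,500 --> 00:00:02,000\nBye\n"
    for problem in check_srt(srt):
        print(problem)
```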

We hope that this series on Localising Audiovisual Content has been useful! Please check our new Audiovisual Translation Glossary for a quick reference to everything that we’ve covered in this series!

3 April 2020 11:21